DayZero

A service design discovery accelerator, and a two-year investigation into what AI changes about consulting.

What it is

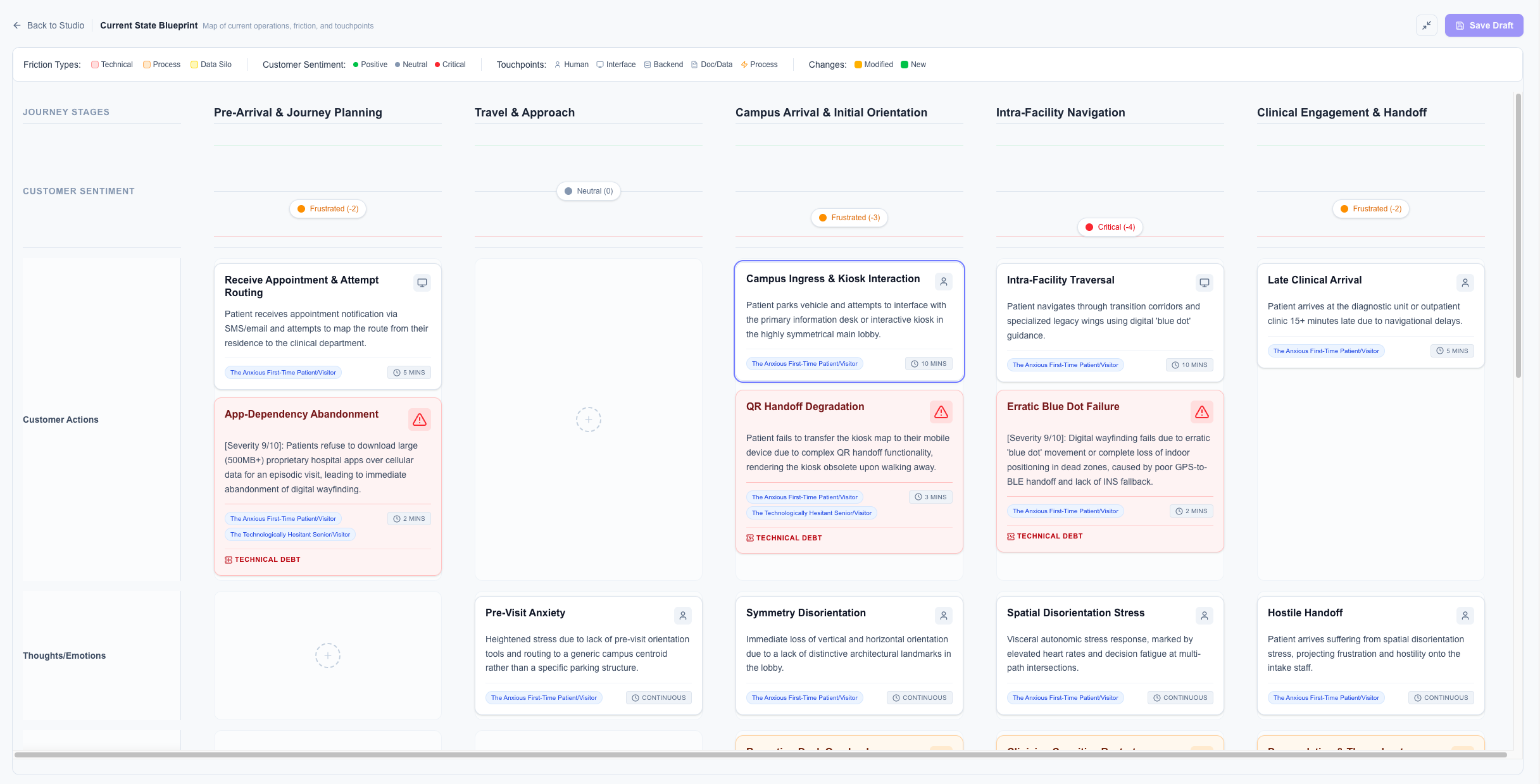

DayZero is a service design discovery accelerator. It turns the messy first weeks of an enterprise engagement — the part where a team is drowning in PDFs, interview transcripts, and incoherent stakeholder input — into structured, editable service design artifacts in a fraction of the time. Service blueprints, personas, opportunity maps, future-state proposals. Not generated and handed over, but generated and then put in front of a practitioner as a hypothesis to challenge. The tool enforces the discipline of service design at each step, refuses to skip ahead to the fun parts, and produces outputs that hold up to scrutiny because they were built under constraint. It exists because the traditional approach to discovery is expensive, exhausting, and too often produces work that's stale before it's finished. This is an attempt to fix that without losing what made service design worth doing in the first place.

Why it exists

Every major service design engagement starts the same way. A team gets dropped into a complex enterprise problem. The client hands over gigabytes of documentation, transcripts from interviews conducted by previous consultants, deck fragments, and half-finished process maps. The first three to four weeks are spent in synthesis — pulling signal out of noise, organizing friction points onto a Miro board, building a rough current-state understanding of a system nobody has ever fully mapped. This phase is called discovery. It's necessary. It's also, in its current form, expensive and slow.

By the time a team finishes discovery, a meaningful portion of the project budget has been spent just getting to the starting line. The team is tired. The client is anxious. And the "strategy" phase — the part where actual value gets created — begins with less runway than it should have.

The question that produced DayZero was narrow: what if the discovery grind could be compressed? Not eliminated — synthesis is where insight lives, and no tool can skip that — but compressed. What if a team could walk into week one with a defensible current-state hypothesis in hand, and spend the expensive phase of the project actually solving the problem instead of finding it?

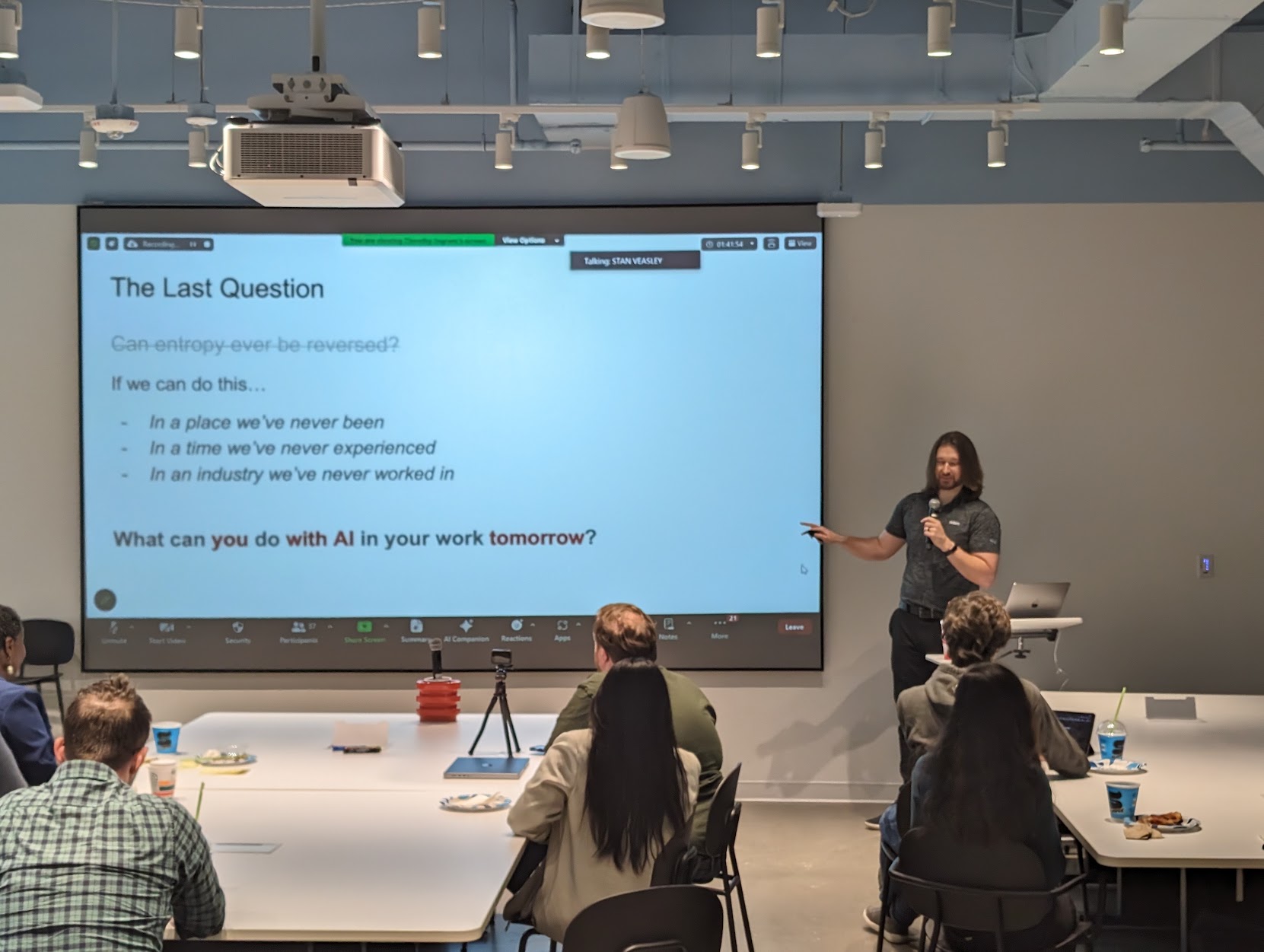

The early answer was a framework, not a tool. In 2024 I gave a talk at the Service Design Network's Dallas chapter about practical AI applications in service design. Most of the talk was demos — using LLMs to synthesize interview transcripts, generate draft personas from research, scaffold opportunity maps. Small moves. Useful, but ad hoc. Each designer had to figure out the prompts on their own, and the outputs were only as good as the person driving them.

What I kept thinking about after that talk was the gap between what good service designers could do with AI and what most of them actually did. The capability was there. The discipline wasn't. DayZero started as an attempt to encode the discipline into the tool itself.

What it had to be

The easy version of this tool is a chatbot. You upload your research, ask it questions, get summaries and draft artifacts. That version exists in a thousand forms already, and it's almost useless for serious work. The outputs are conversational, unstructured, and context-free. They collapse under pressure because they weren't built for pressure in the first place.

The hard version is software that enforces the methodology of service design at every step.

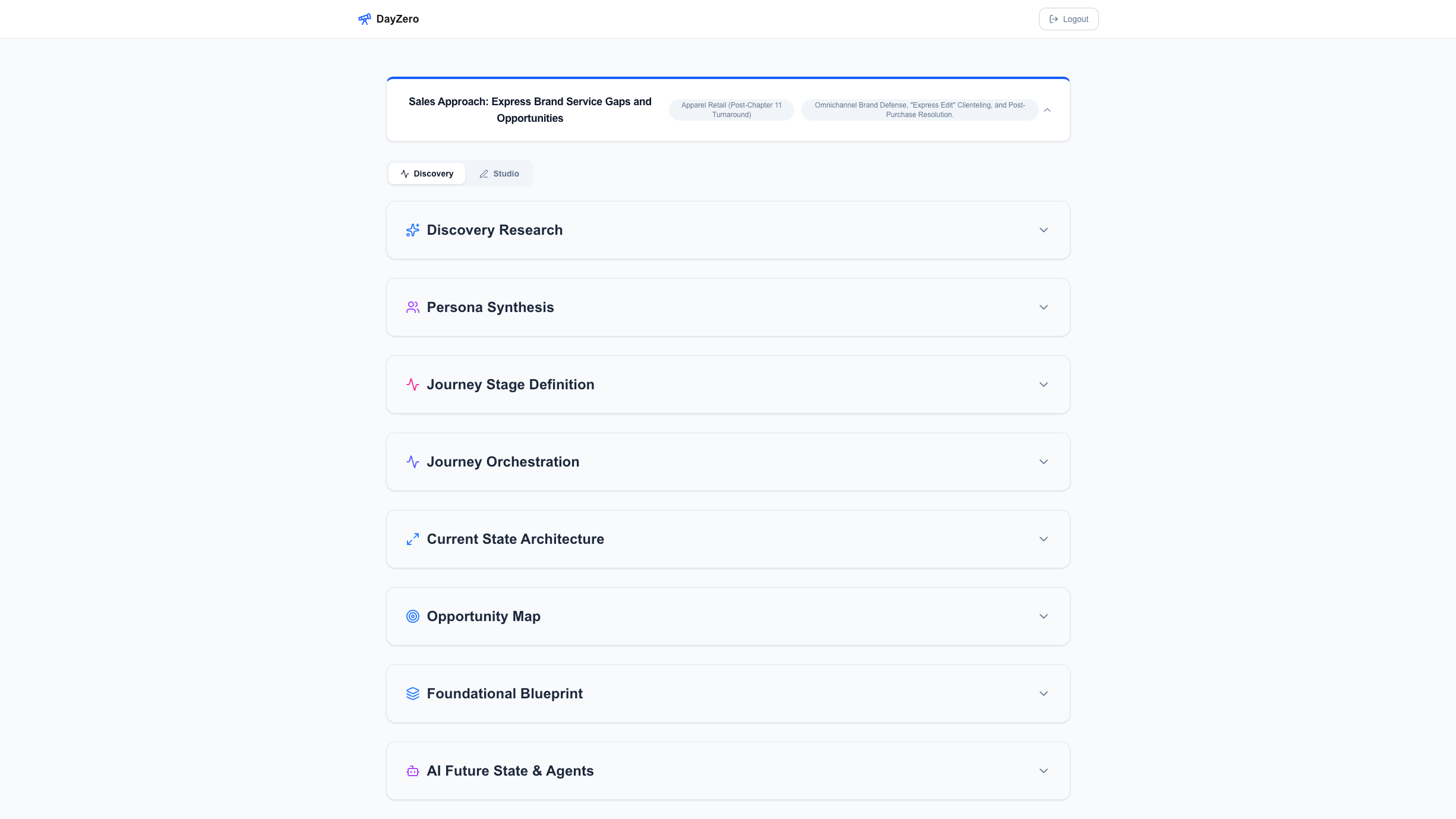

DayZero is architected as a gated, linear pipeline. It cannot skip stages. It cannot jump from raw research to a future-state vision without first producing a persona matrix, a current-state blueprint, and an opportunity map, in that order. Each stage has structural requirements the output must satisfy before the next stage unlocks. Backstage operations, frontstage employee cognitive load, and customer sentiment are treated as distinct vectors that have to be accounted for separately. This isn't because LLMs can't handle them together. It's because when they handle them together, the output becomes mush, and mush doesn't hold up in front of a skeptical executive.
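To make the gating concrete, here is a minimal sketch of a linear pipeline that refuses out-of-order stages. It is an illustration, not DayZero's actual code: the stage names come from the description above, but `Pipeline`, `StageLocked`, and the `REQUIRED_KEYS` table are hypothetical, and "structural requirements" are reduced to "these keys must be present and non-empty."

```python
from dataclasses import dataclass, field

# Stage order taken from the text; everything else here is an assumption.
STAGES = [
    "persona_matrix",
    "current_state_blueprint",
    "opportunity_map",
    "future_state",
]

# Hypothetical structural requirements: keys each stage's output must fill.
# The blueprint keeps the three vectors separate rather than blending them.
REQUIRED_KEYS = {
    "persona_matrix": {"personas"},
    "current_state_blueprint": {"backstage", "frontstage", "customer_sentiment"},
    "opportunity_map": {"opportunities"},
    "future_state": {"changes"},
}


class StageLocked(Exception):
    """Raised when a stage is submitted before it has been unlocked."""


@dataclass
class Pipeline:
    completed: list = field(default_factory=list)

    def next_stage(self):
        """Return the only stage currently unlocked, or None when finished."""
        if len(self.completed) < len(STAGES):
            return STAGES[len(self.completed)]
        return None

    def submit(self, stage, output):
        """Accept output for the unlocked stage if it meets its requirements."""
        if stage != self.next_stage():
            raise StageLocked(
                f"{stage!r} is locked; next stage is {self.next_stage()!r}"
            )
        filled = {key for key, value in output.items() if value}
        missing = REQUIRED_KEYS[stage] - filled
        if missing:
            raise ValueError(f"{stage!r} output is missing: {sorted(missing)}")
        self.completed.append(stage)
```

In this sketch, calling `submit("future_state", ...)` on a fresh `Pipeline` raises `StageLocked`, and a blueprint that omits `customer_sentiment` is rejected without unlocking the opportunity map.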

The harder problem, though, wasn't the AI. It was the humans using it.

There's a failure mode I started calling the Lazy Consultant trap. When a tool produces an 80% baseline fast enough, the temptation is to export it, drop it in a deck, and present it as finished work. This is the death of the craft. It's also a genuine risk when generation is cheap and time is expensive.

Design principle: every AI output is a hypothesis, not a deliverable.

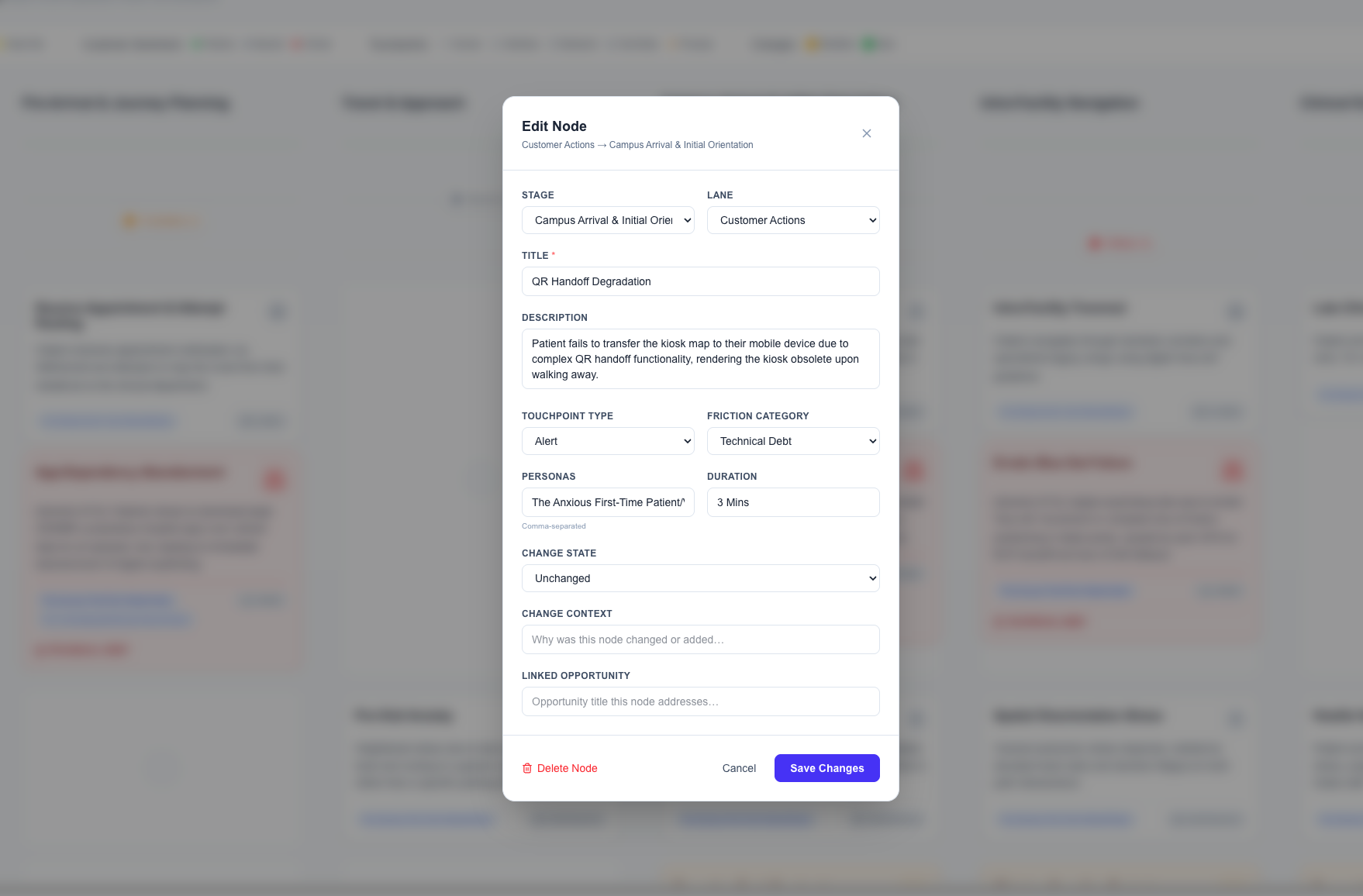

To keep consultants out of the Lazy Consultant trap, DayZero treats every AI output as a hypothesis, not a deliverable. The Strategy Studio, the interactive canvas where the work actually gets done, is non-destructive. The AI's proposed blueprint sits on the left. The consultant's edits sit on top, visible as a layer, tracked over time. You cannot export a clean final artifact without making manual edits. The tool also requires you to pick the "Moments that Matter" yourself, anchoring the blueprint to decisions that a human has to own.
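One way to picture the non-destructive layer and the export rule is the sketch below. It assumes a much simpler data model than the real Strategy Studio: `Canvas`, `Edit`, and `ExportBlocked` are hypothetical names, and a blueprint is reduced to a dictionary of steps.

```python
from dataclasses import dataclass, field


class ExportBlocked(Exception):
    """Raised when a canvas is exported without human engagement."""


@dataclass(frozen=True)
class Edit:
    step_id: str
    field_name: str
    new_value: str
    author: str


@dataclass
class Canvas:
    # step_id -> {field: value}. The AI proposal is never mutated.
    ai_proposal: dict
    edits: list = field(default_factory=list)
    moments_that_matter: set = field(default_factory=set)

    def apply_edit(self, edit):
        """Record a human edit as a layer on top of the proposal."""
        if edit.step_id not in self.ai_proposal:
            raise KeyError(edit.step_id)
        self.edits.append(edit)

    def mark_moment(self, step_id):
        """Record a step the consultant has chosen as a Moment that Matters."""
        if step_id not in self.ai_proposal:
            raise KeyError(step_id)
        self.moments_that_matter.add(step_id)

    def current_view(self):
        """Replay the edit layer over a copy of the untouched proposal."""
        view = {sid: dict(fields) for sid, fields in self.ai_proposal.items()}
        for e in self.edits:
            view[e.step_id][e.field_name] = e.new_value
        return view

    def export(self):
        """Refuse to export until a human has edited and picked moments."""
        if not self.edits:
            raise ExportBlocked("no manual edits have been made")
        if not self.moments_that_matter:
            raise ExportBlocked("no Moments that Matter have been selected")
        return {
            "blueprint": self.current_view(),
            "moments_that_matter": sorted(self.moments_that_matter),
        }
```

Because edits are stored as a separate list and replayed on demand, the original proposal stays available for comparison, which is what makes the layer trackable over time.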

This is a constraint, and constraints slow things down. That's the point. The goal isn't to remove the consultant from the work; it's to remove the grind and leave the judgment intact. If a tool produces better work in less time and forces the practitioner to engage with it critically, it protects the craft. If it produces work that can be exported untouched, it erodes the craft. These are different tools even if they look similar from the outside.

DayZero is the first kind.

What building it taught me

The thing I didn't expect, and that changed how I think about AI in design work generally, is how much the model wants to skip to the interesting part.

Early versions of DayZero had a persistent bias. When asked to propose a future state, the model would reach immediately for AI-powered solutions. Agentic workflows. LLM-driven customer interactions. Generative personalization. All of it plausible, some of it good, none of it grounded in the actual operational reality of the client. It was solving problems with futuristic capabilities before it had understood what was broken in the basics.

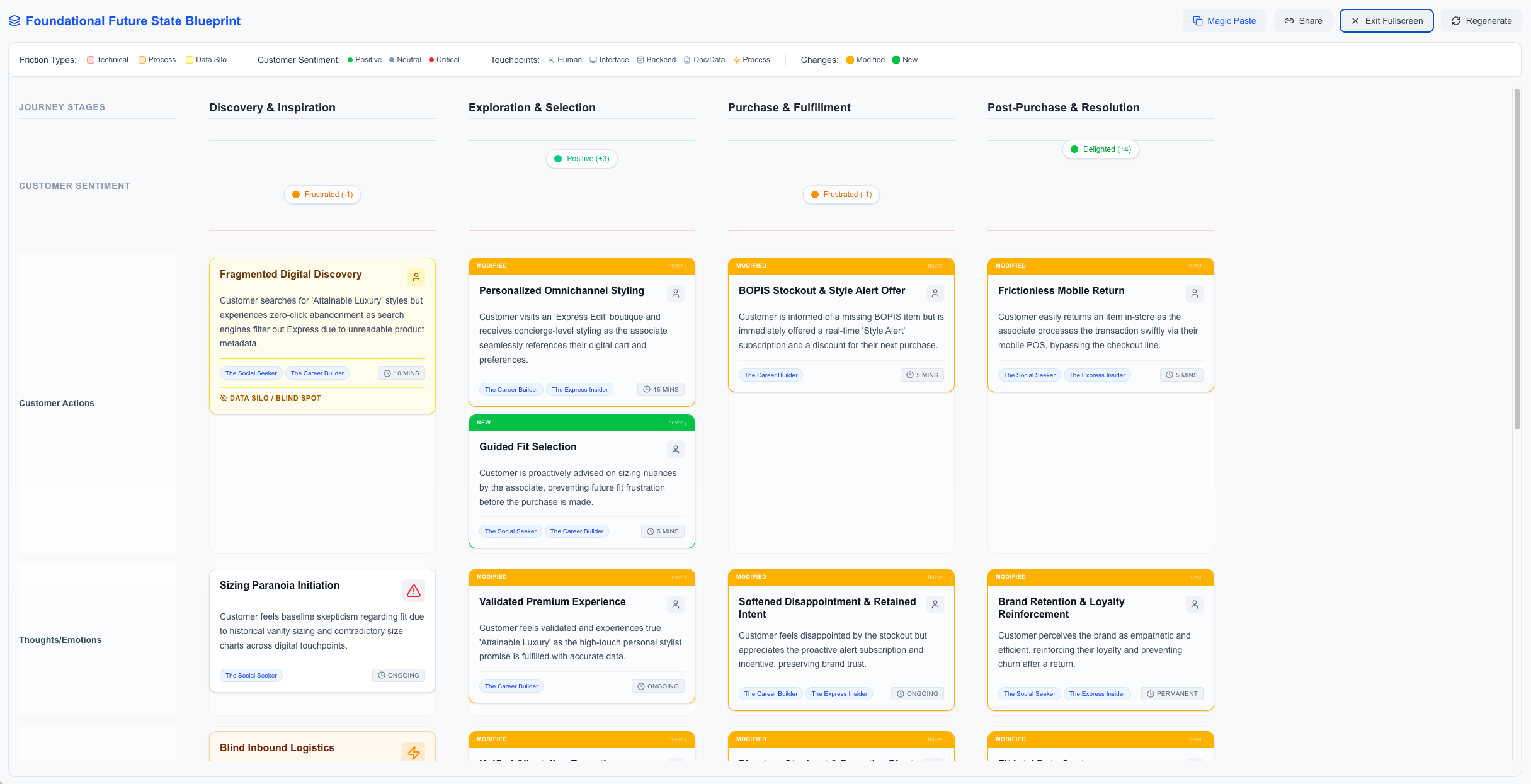

The fix was architectural. Before the tool is allowed to propose any AI-enabled future state, it's required to generate what I started calling the Foundational Future State: an opportunity map grounded entirely in People, Process, and Technology. Basic plumbing. Does the physical-to-digital handoff work? Are the right people in the room when decisions get made? Does the CRM actually talk to the scheduling system? You can't build a sophisticated AI-driven vision on top of a broken manual process. The tool has to prove it understands the baseline before it's allowed to dream.
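A rough sketch of that precondition follows. It assumes each opportunity is tagged with the lens it addresses and that the baseline counts as understood once all three lenses are covered. That coverage rule, the `lens` field, and both function names are assumptions made for illustration; the real check is presumably richer.

```python
# The three lenses named in the text.
FOUNDATIONAL_LENSES = ("people", "process", "technology")


def foundational_gaps(opportunities):
    """Return the lenses that no opportunity in the map addresses yet."""
    covered = {o["lens"] for o in opportunities}
    return [lens for lens in FOUNDATIONAL_LENSES if lens not in covered]


def can_propose_ai_future_state(opportunities):
    """Hypothetical gate: AI-enabled proposals unlock only with no gaps."""
    return not foundational_gaps(opportunities)


foundational_map = [
    {"lens": "process", "title": "Fix the physical-to-digital handoff"},
    {"lens": "technology", "title": "Connect the CRM to scheduling"},
]

print(foundational_gaps(foundational_map))
print(can_propose_ai_future_state(foundational_map))
```

With the sample map above, the people lens is still uncovered, so the gate stays closed until someone accounts for who needs to be in the room.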

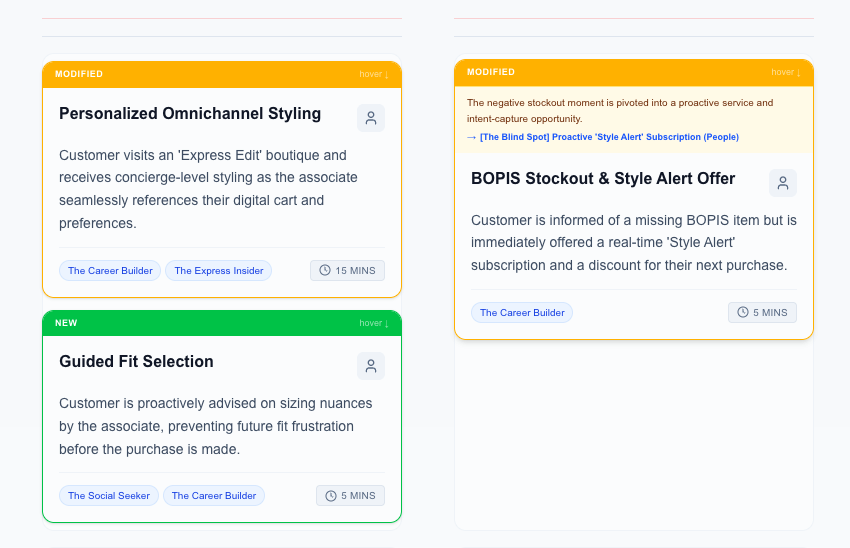

The second fix was the delta. Once a Foundational Future State exists, the tool produces a diff — a visual representation of exactly where the proposed future differs from the current state, and which friction point each change resolves. Not a shopping list of improvements. A traceable argument: this change fixes that problem. If a proposed change doesn't map to a documented friction point, it doesn't ship.
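The traceability rule can be sketched as a small check over proposed changes. This assumes friction points and changes carry simple string identifiers; `ProposedChange` and `trace_delta` are hypothetical names, and the visual diff itself is out of scope here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedChange:
    change_id: str
    description: str
    resolves: tuple  # ids of the friction points this change claims to fix


def trace_delta(changes, friction_points):
    """Split changes by traceability and list friction left unaddressed.

    A change is accepted only if it names at least one friction point and
    every point it names is documented in `friction_points`.
    """
    known = set(friction_points)
    accepted, rejected = [], []
    for change in changes:
        if change.resolves and set(change.resolves) <= known:
            accepted.append(change)
        else:
            rejected.append(change)
    resolved = {fid for change in accepted for fid in change.resolves}
    unaddressed = sorted(known - resolved)
    return accepted, rejected, unaddressed
```

Returning the unaddressed friction points alongside the rejected changes keeps the argument two-way: every change answers to a problem, and every documented problem is visibly either answered or still open.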

This sounds obvious when written down. It wasn't obvious in practice. Most AI tools in this space are optimizing for impressiveness — the more ambitious the output, the better the demo. DayZero optimizes for defensibility. The output has to survive contact with an enterprise architect who's been maintaining the current system for fifteen years and doesn't want to hear about AI.

What I learned building this, more than anything else, is that the interesting work in AI-enabled design isn't the AI part. It's the constraints you put around it. The model is powerful enough. What it needs is structure, discipline, and a clear view of what problem it's actually solving. Most of what I did building DayZero was saying no to things the model wanted to do.

That turns out to be most of the job.

Where it is now

DayZero is in active use inside Slalom with a small group of early adopters. Leadership has been supportive. The Service Design Center of Excellence is figuring out how to bring it into the secure environment so it can be used on client work at scale. I co-facilitated two sold-out workshops on AI and service design at the Service Design Global Conference in Dallas in 2025, where the methodology behind DayZero was the backbone of both sessions.

The use case that surprised me is that DayZero turns out to be as useful before a project starts as during it. The original design assumed it would be used after a team won an engagement, to compress the discovery phase. What's happened in practice is that teams are using it to win engagements — feeding public market research and known client pain points into the pipeline before a pitch, then walking into the first meeting with a surgically specific hypothesis about what's broken and where. It reframes the sales conversation from "we'd like to help you figure it out" to "we think this is what's happening, and here's how we know."

It's still in beta. The output quality varies depending on the input quality, which is true of any tool but worth saying. The security work to make it usable on client data is not finished. The methodology has been validated in about a dozen engagements at this point, which is enough to know it works and not enough to claim it's proven at scale.

What I'm working on now is the scaffolding around it — the training, the documentation, the patterns for how a team uses it without falling into the Lazy Consultant trap. The tool is the easy part. Making it usable by other people is the hard part.

If you're working on problems in this space, I'd like to hear about it. Say hi.